Toronto police used Clearview AI facial recognition software in 84 investigations

Toronto police used Clearview AI facial recognition software to try to identify suspects, victims and witnesses in 84 criminal investigations during the three and a half months officers used the controversial technology before their police chief found out and ordered them to stop.

The revelations are contained in an internal police document recently obtained by CBC News through an appeal of an access to information request.

Between October 2019 and early February 2020, officers uploaded more than 2,800 photos to the U.S. company’s software to look for a match among the three billion images Clearview AI extracted from public websites, such as Facebook and Instagram, to build its database.

In mid-February 2020, Toronto police first admitted that some of its officers had used Clearview AI, one month after the service denied using it. But until now, no details have been released about how, and to what extent, officers used the facial recognition software.

The internal report shows how detectives from multiple units started to use a free trial of Clearview AI to advance criminal investigations without consulting anyone other than the company itself and internal supervisors about the legality and accuracy of the technology.

“When you’re enforcing the law, your first obligation is to comply with it,” said Brenda McPhail, director of the privacy, technology and surveillance program at the Canadian Civil Liberties Association (CCLA). “It really doesn’t seem like that was top of mind as the concept of this tool, as the examples of this tool, as the conversations about this tool circulated through the force.”

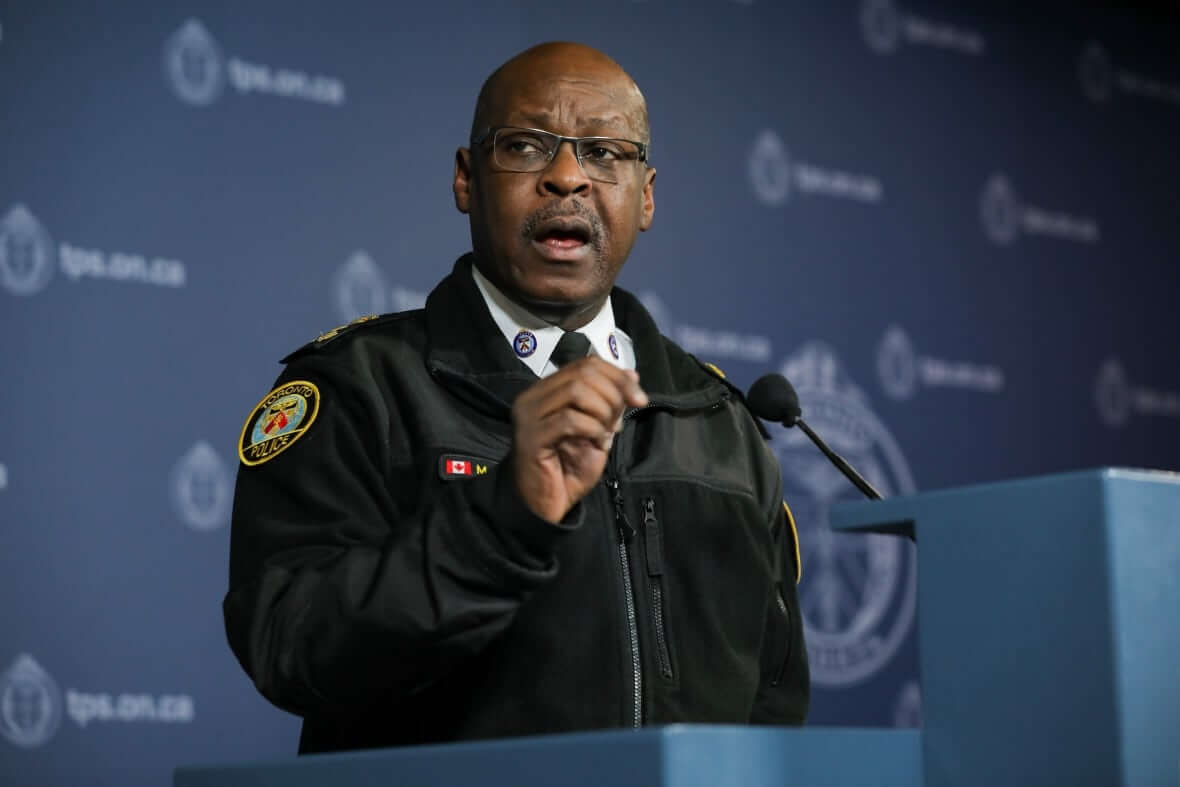

According to the report, detectives using the technology only met with Crown attorneys about Clearview AI after a New York Times investigation in January 2020 revealed details of how the company compiled its database and its use by more than 600 law enforcement agencies in Canada, the United States and elsewhere. Soon after, then-Toronto police chief Mark Saunders was informed that his officers were using the software and ordered them to stop on Feb. 5.

Since then, four Canadian privacy commissioners have determined that Clearview AI conducted mass surveillance and broke Canadian privacy laws by collecting photos of Canadians without their knowledge or consent.

Criminal cases could be in jeopardy

Given those findings, the co-chair of the Criminal Lawyers’ Association’s criminal law and technology committee says the police service’s lack of due diligence before using Clearview AI could put cases where it was used at risk.

“If police violated the law as part of their investigations, this could make those investigations vulnerable to charter challenges,” said Eric Neubauer, a Toronto lawyer.

“We have the right in Canada to be free from unreasonable search and seizure — one could conceivably see an argument being brought in court that this was a fairly profound violation of that right.”

So far, Neubauer and McPhail say they haven’t seen a Canadian example of the software’s use face legal scrutiny in court. That said, according to the report, two Toronto cases were already before the courts as of March 2020 that were based at least in part on evidence officers generated through the use of Clearview AI.

Of the 84 criminal investigations where searches were completed, the report says that 25 were advanced through Clearview AI, with investigators identifying or confirming the whereabouts of four suspects, 12 victims and two witnesses.

No plans to use Clearview AI: Toronto police

In a statement, Toronto police spokesperson Connie Osborne told CBC News that the service has no plans to use Clearview AI again.

“The Toronto Police Services Board is currently developing a policy for the use of artificial intelligence technology and machine learning following public consultation,” Osborne said.

“The service is also developing a robust procedure for the adoption of new technology to ensure governance of procurement and any potential use is compliant with the relevant laws, including privacy requirements.”

The proposed AI technology policy would establish five risk-based categories for technology ranging from minimal to extreme risk. “A facial recognition software with illegally sourced data that could result in mass surveillance” is listed as an example of extreme risk technology on the board’s public consultation web page. Technology in that category would not be allowed for use under the proposed police policy.

Detective introduced to Clearview AI at conference

According to the report obtained by CBC News, Toronto police were first introduced to Clearview AI at a victim identification conference in the Netherlands in October 2019.

While there, a detective attended an FBI and U.S. Department of Homeland Security showcase of the technology as an investigative tool in identifying exploited children online — and also used Clearview AI in connection with real child exploitation investigations.

Within days of returning to Toronto, the service obtained a free trial of Clearview AI. By the end of October, investigators from both the child exploitation and intelligence services were using the technology. By mid-December 2019, an internal showcase of Clearview AI was held for roughly 100 investigators from sex crimes, homicide and financial crimes units.

In the report, the details are redacted for all but one investigation where officers used Clearview AI: the third homicide of 2020 was advanced through the use of the technology and an arrest was made. Although the report doesn’t specify, it was the suspect who was identified through the software.

Toronto police confirmed with CBC News that the victim in that case was Maryna Kudzianiuk. The 49-year-old died in hospital after firefighters found her while responding to a Scarborough highrise fire in January 2020.

After an autopsy, police ruled her death a homicide, and about a week later a man was arrested and charged with first-degree murder.

‘A slippery slope’

While examples like that might show the benefits of the technology to help solve — or stop — the most serious crimes, the CCLA’s McPhail says that “it’s such a slippery slope.”

She told CBC News that child exploitation is often used to legitimize invasive technology, such as that sold by Clearview AI, by claiming “one is too many,” without focusing on all of the images of children scooped up non-consensually.

“The faces of our kids — if we’ve put up their photo on Facebook for Grandma — end up in a police lineup that can be searched by police in Canada, by police in the U.S., by police in Europe,” McPhail said. “What is the risk to all of the children proportionate to the benefit to the few children?”

McPhail and Neubauer also raised concerns about the accuracy of Clearview AI’s software.

“This technology has been found to have higher incidences of false positives or misidentifications for faces of people of colour,” Neubauer said. “This means more investigations, potentially detentions and arrests of individuals in marginalized groups who already face disproportionate levels of arrest, investigation and detention.”

Given those issues, the Toronto lawyer stresses the importance of studying emerging technologies before using them in real cases going forward.

“These tools used by law enforcement must be subject to public and legal scrutiny and oversight,” he said. “That means full transparency as to when it’s being used and also how it works.”

Provincial watchdogs issue order to delete photos

Clearview AI left the Canadian market in the summer of 2020. But concerns around the images the company has collected remain.

Earlier this month, the provincial privacy watchdogs for British Columbia, Alberta and Quebec ordered Clearview AI to delete images and biometric data collected without permission.

Clearview AI previously told the B.C. privacy commissioner that it was “simply not possible” for the company to identify whether individuals in photos were in Canada at the time the image was taken or whether they were Canadian citizens or residents.

The Information and Privacy Commissioner of Ontario says it doesn’t have jurisdiction over private companies such as Clearview AI — the way privacy watchdogs do in B.C., Alberta and Quebec — because Ontario doesn’t have a private-sector privacy law.

But Ontario’s privacy commissioner is working with its federal and provincial counterparts to develop guidance on the use of facial recognition technologies by police, which the office says will be released next year.
